Best Practices for Deploying and Scaling Embedded Analytics: Part 1

Like every other feature in an application, embedded dashboards and reports are constantly evolving. Capabilities that were once cutting-edge, such as embedded self-service analytics, are now commonplace, and sophisticated capabilities such as adaptive security and write-back are taking analytics far beyond basic dashboards and reports.

As embedded analytics becomes increasingly complex, deploying and scaling it also gets more complicated. Keep these considerations in mind as you embed analytics in your applications:

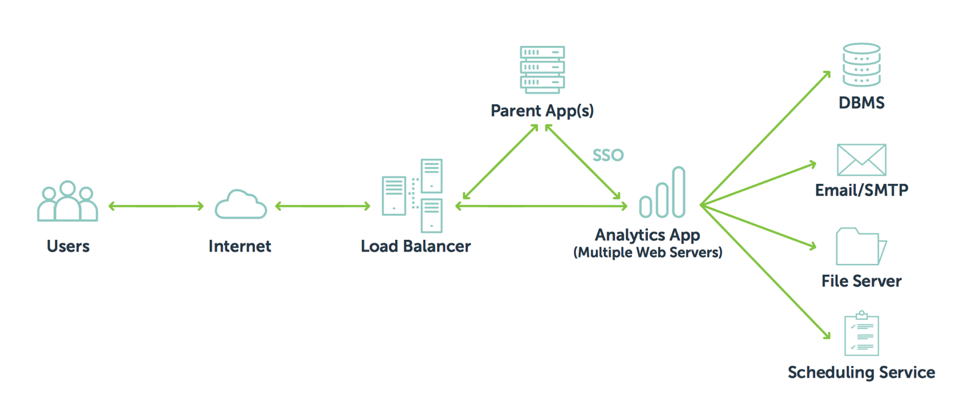

Environmental Architecture

An embedded analytics architecture is similar to a typical web architecture in that one web application communicates with another, the parent application. But to best serve your users, you also need a sound database with a schema designed for analytics. Strategies for an analytics-oriented schema include flattened tables, which store the results of joins so that queries do not have to repeat them, and aggregated tables, which store precomputed summaries.
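As a rough illustration of flattened and aggregated tables, here is a minimal sketch using Python's built-in `sqlite3` module. The table and column names (`customers`, `orders`, `orders_flat`, `sales_by_region_month`) and the sample rows are hypothetical, invented only for this example; a real schema will depend on your data and your database engine.

```python
import sqlite3


def build_analytics_tables(conn: sqlite3.Connection) -> None:
    """Create a flattened table and an aggregated table from normalized sources."""
    conn.executescript(
        """
        -- Hypothetical normalized (transactional) tables with sample data.
        CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT);
        CREATE TABLE orders (
            id INTEGER PRIMARY KEY,
            customer_id INTEGER REFERENCES customers(id),
            order_date TEXT,
            amount REAL
        );
        INSERT INTO customers VALUES (1, 'East'), (2, 'West');
        INSERT INTO orders VALUES
            (1, 1, '2024-01-05', 100.0),
            (2, 1, '2024-01-20', 50.0),
            (3, 2, '2024-01-11', 75.0);

        -- Flattened table: the join is resolved once, ahead of time,
        -- so dashboard queries do not need to repeat it.
        CREATE TABLE orders_flat AS
        SELECT o.id AS order_id, o.order_date, o.amount, c.region
        FROM orders o
        JOIN customers c ON c.id = o.customer_id;

        -- Aggregated table: totals precomputed per region and month.
        CREATE TABLE sales_by_region_month AS
        SELECT region,
               substr(order_date, 1, 7) AS month,
               COUNT(*) AS order_count,
               SUM(amount) AS total_amount
        FROM orders_flat
        GROUP BY region, month;
        """
    )
    conn.commit()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    build_analytics_tables(connection)
    for row in connection.execute(
        "SELECT * FROM sales_by_region_month ORDER BY region"
    ):
        print(row)
```

A dashboard can then read `sales_by_region_month` directly instead of joining and summing the transactional tables on every request. The trade-off is that both derived tables have to be refreshed whenever the source data changes.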

Application Design

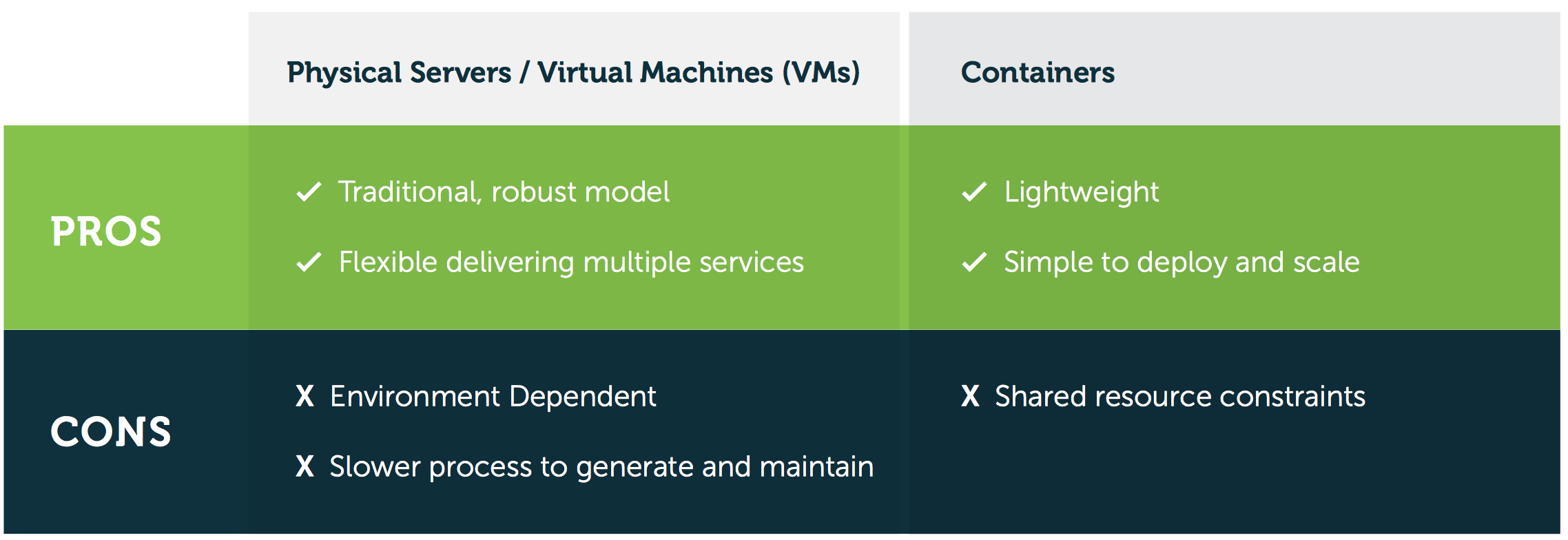

The next step is to consider how to package and host your analytics application. Traditionally, the application is hosted on a physical server or a virtual machine (VM), though some application teams are now migrating to containers. Your decision should be based on your company’s specific needs:

- Containers are particularly useful for microservice-oriented architectures with distributable services. They’re also good for prototyping and can be replicated relatively quickly. However, their file storage is not persistent by default, so keeping data between runs requires external tools.

- Virtual machines are good at supporting monolithic application architectures as well as handling multiple functionalities. You can package multiple services and capabilities and still deliver them in a load-balanced environment. The drawbacks are that they are environment-dependent and their turnaround time is slightly longer than that of containers.

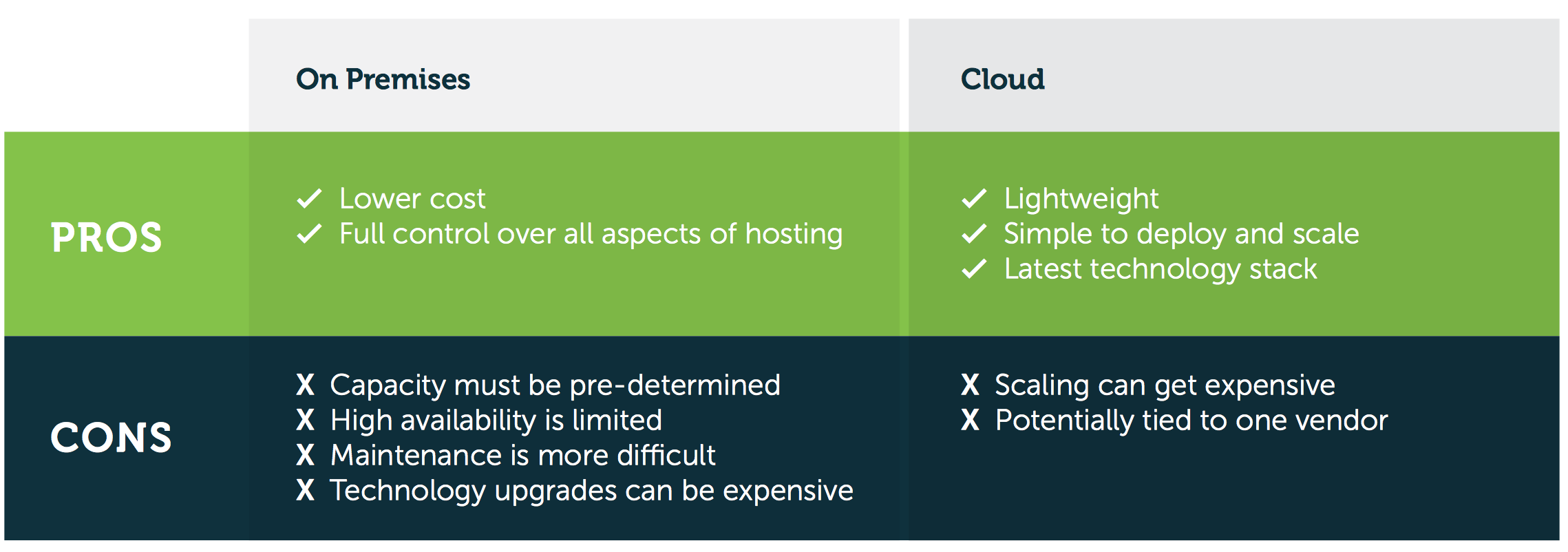

Hosting On-Premises vs. Cloud

In a typical embedded analytics environment, you either host the delivery mechanism yourself or provide it as a service to your end customers. Though on-premises hosting is the best option for delivering mission-critical services, the ease of deploying and maintaining a cloud-hosted environment makes cloud hosting the better option for embedded analytics.

The main benefit of hosting your application within your own environment or data center is that it costs less. However, the turnaround time to procure and deploy hardware is longer, and the hardware itself is more difficult and expensive to upgrade. Crucially, scaling is less straightforward than in the cloud because you have to predetermine the size of your environment or risk limiting the availability of your application.

Hosting in the cloud makes scaling considerably easier. All you have to do is script it out, request a virtual machine (or Docker containers), and within a matter of minutes your environment has scaled to cope with additional traffic. While the cloud often has a low cost of entry, your costs may increase as you grow and scale, and you’ll become more heavily reliant on your vendor. But the cloud solves many of the drawbacks of the on-premises model. Because it makes scaling easy, cloud hosting is recommended, as long as you keep an eye on expenses.
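To make the "script it out" step a little more concrete, here is a minimal sketch of the sizing arithmetic that a scaling script might run before requesting or releasing instances. The function names, the per-instance capacity, and the 20% headroom are illustrative assumptions, not values from any particular cloud provider. The actual provisioning call is left out because it depends on your vendor's API.

```python
import math


def instances_needed(
    requests_per_second: float,
    capacity_per_instance: float,
    headroom: float = 0.2,
    min_instances: int = 1,
) -> int:
    """Estimate how many instances are needed to serve the given load.

    capacity_per_instance is the (assumed, measured elsewhere) number of
    requests per second one VM or container can handle. headroom adds a
    safety margin on top of the observed load.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    required = requests_per_second * (1 + headroom) / capacity_per_instance
    return max(min_instances, math.ceil(required))


def scaling_delta(current_instances: int, needed_instances: int) -> int:
    """Positive result: instances to add. Negative result: instances to remove."""
    return needed_instances - current_instances


if __name__ == "__main__":
    needed = instances_needed(requests_per_second=100, capacity_per_instance=50)
    print("needed:", needed, "delta:", scaling_delta(2, needed))
```

In an on-premises environment, the same calculation has to be done up front against the expected peak load, because the instance count cannot be changed within minutes. In the cloud, the script can rerun it as traffic changes, which is also a natural place to log instance counts so that you can keep an eye on expenses.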