How to Choose a Database for Your Predictive Project

Predictive analytics has set a new bar for business intelligence. It makes applications infinitely more valuable, separates software from competitors, and offers new revenue streams. But predictive analytics platforms and implementations are also incredibly complex. Embedding effective predictive solutions in your application requires choosing the right talent and resources—and chief among them is a database (or databases) to house your datasets.

Related: What Is Predictive Analytics?

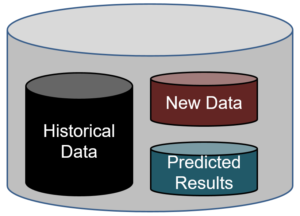

In predictive analytics implementations, there are two different datasets that come in to question: Historical data, which is used to train your algorithm in how to predict an outcome. And new data, where future predictions need to be done.

Though most application teams tend to use separate databases to store their historical and new data, it’s not uncommon to see teams wanting to have one database for all datasets. In such cases, one would use a six-month filter for historical data and a last day/week filter for predicting on new data.

As for choosing the specific database systems for your predictive analytics platform to pull from, consider these three factors: volume, support, and scalability.

Data Volume

Data volume typically affects the historical data where transactions from prior months/years are saved. However, in some industries it also affects new data predictions, such as in cases where sensors output thousands of data points frequently.

The volume threshold for deciding on a database is typically 1 terabyte. The data volume for most small and medium businesses falls within this spectrum. For such customers, a traditional database like SQL Server, Oracle, PostGres, or MySQL can easily work.

If your dataset spans thousands of terabytes of petabytes, on the other hand, look at non-relational databases that can scale by adding multiple nodes. These include Amazon Redshift, Google BigQuery, Snowflake, and Hadoop-based solutions. Note that these databases will require data experts to work with them.

Support Resources

Many customers ignore the aspect of support and maintenance. But often, these companies find they need to hire talent to properly install, maintain, and tune their database servers for optimum performance. There needs to be processes in place for backups, dealing with occasional failures/data losses, etc. Always consider the investment you have to make for infrastructure/human resources and compare it to what you would pay for a dedicated service in the cloud.

Unless you have hundreds of different applications deployed, it’s easier and cheaper to deploy with the cloud. And even in the former case, I would still recommend letting the experienced data professionals in the cloud manage the database for you—unless you find that there is a big financial gain by doing it in house.

Data Scalability

Most companies start with vertical data scaling by adding more resources, such as CPU and RAM, to an existing machine. Cloud databases like Redshift scale nicely just by adding more nodes. You can also partition the data so different clusters handle data for different sets of customers.

The next line of attack is to do horizontal scaling by creating and clustering replicas of the database. You’ll need to buy licenses for different machines where the database has to be installed. NoSQL databases like Cassandra and Mongo DB are good candidates to scale horizontally by distributing where the data is saved.

In summary:

- For training data, go with traditional databases until you hit the 1 TB limit. Beyond that, look to add specific clusters to save data for sets of customers or look to solutions like Red Shift or Google Big Query.

- For predictions on new data, most times a traditional database should meet the need.

- Consider the investment needed to install and maintain your databases in house versus in the cloud.